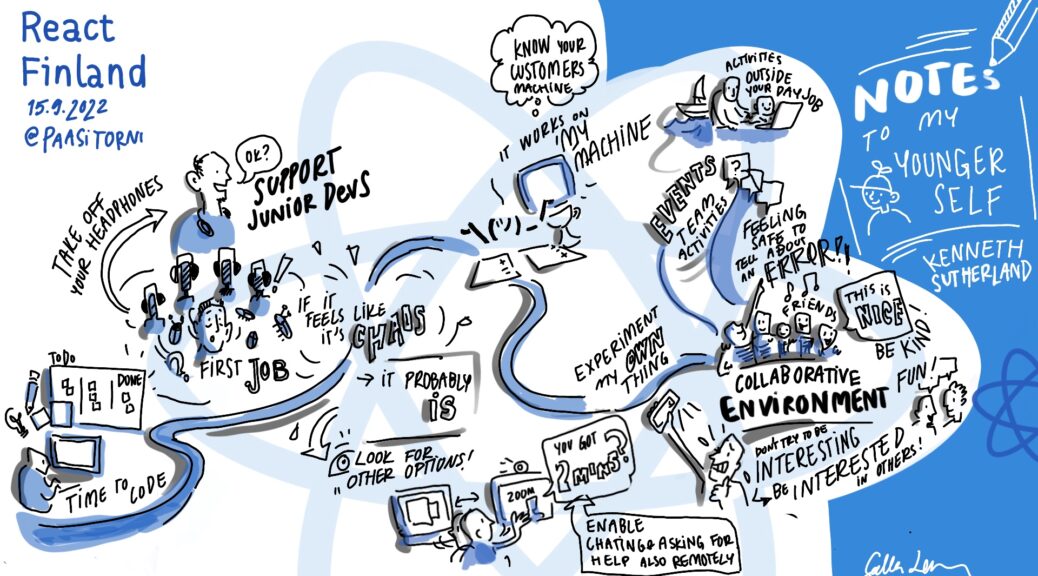

How to get a big break – Notes to my younger self.

Getting into the positive territory of my talk! A turn for the worse ended up being a turn for the better. Here is what happened, why, and how I got my ‘big break’.

This carries on directly from the previous post and, like the others, is based on the talk I gave at the Finland React JS conference. If you’ve read the previous post, it wasn’t a terribly happy ending. I did create a good number of items, but over time the one main item that failed ultimately meant that more work wasn’t coming through. Because of previous experiences, what I could see happening, and the fact that I was really enjoying messing around with Adobe Flex/ActionScript, I took matters into my own hands.

Direct Action

Work was drying up and I was getting zero direction from management, even after asking about creating work for the client that I knew they could use. After all, why not give them something when I wasn’t working on anything? Nope…

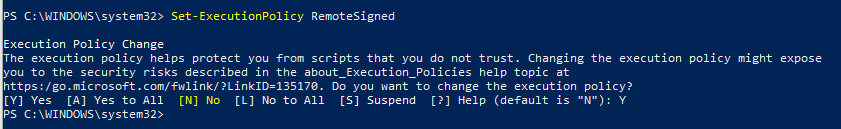

So it was time to take matters into my own hands. I was busy doing little demos, experimenting with new libraries and new techniques, and answering questions on Stack Overflow, while also reading common questions and creating examples based on them. What did I do with these demos and snippets? Well, I created a simple WordPress blog (yes, this blog) and every now and then I’d put up my examples and source code.

This wasn’t done to get coverage, but purely to share my knowledge. In turn, sharing what I knew helped me extend what I knew. Back then my site used to be rather popular – I even made a little bit of money from it via Google ads, plus some free merchandise! That was a nice bonus when I had expected nothing.

The Point

What am I getting at? Well, experimenting and keeping things small and simple allowed me to learn the intricacies of the language, learn the lifecycle of the Flash Player, find out where the memory leaked, and keep up with the add-ons as well as the latest and greatest releases. This really mattered.

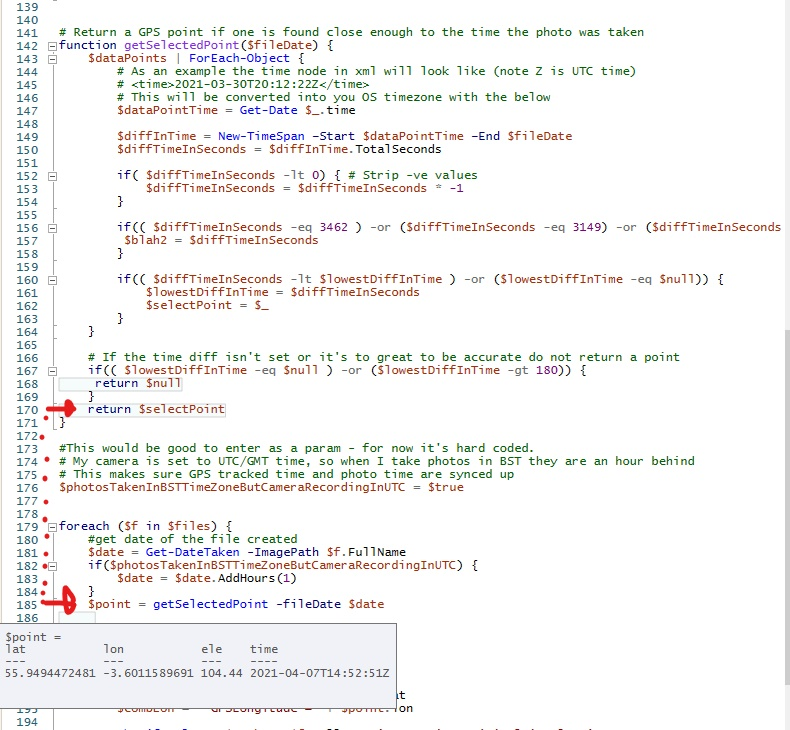

In today’s market, this would be like me taking the latest hooks coming out in React 18, creating a demo against each one, comparing it to what went before, and figuring out where and when lifecycle methods were being called and why. So dig in, create the smallest of demos and tinker around.

The Breakthrough

So, not too far down the line, the inevitable happened and I was made redundant once more. Even though it wasn’t the first time and not a huge surprise, that doesn’t make it any easier. While I was hunting for jobs, there were zero in my local area. There were quite a few down south in London, and in the end I got hired by a high-end consultancy company that worked with a range of leading banks.

While I can’t say for sure exactly why they hired me, I know my blog was a big selling point for my coding abilities (yes, it’s very much lapsed now – this was around 15 years ago). When it came to the interviews, I now knew the code framework inside and out, and that certainly got me through multiple stages.

In the end, each week I’d fly to London on the Monday morning and fly back home on the Friday. I was renting a place in London while there, and even with those extra expenses I was still coming home with way more than I’d ever done in the past – not to mention it was great fun being involved in such a large project. There was a cost, but that’s for another story…

Now that I’d entered the contract market, work took on a completely different feel. Again, that’s for another story. For those that can, it’s something I’d certainly recommend, as long as you are aware of its drawbacks.